Containment Structures

Installation, 2026

The boundary between authentic reality and synthetic media is violently dissolving. In response, Containment Structures explores our escalating arms race against hyper-realistic AI imagery and video. The work bridges real-world cybersecurity with speculative technological countermeasures and interrogates how we might physically and cryptographically prove what is real. Viewers navigate a landscape of defensive architecture. Immersive light installations strip human identity down to the undeniable “biological watermark” of a pulsing heartbeat. Elsewhere, conceptual hardware solutions bind authentication to the quantum state of captured light. Other installations visualise mutating adversarial noise layers engineered to fatally crash deepfake models, while monumental symbols advocate for the intuitive democratisation of algorithmic safety. Ultimately, the show reframes digital defence: it is no longer just a complex technical hurdle, but a critical reclamation of truth in a post-reality age.

Rhythmic Dimming

Cybersecurity researchers use remote photoplethysmography (rPPG) to detect deepfakes by tracking the biological watermark of a human pulse: the faint, periodic change in skin colour as blood moves beneath it. This installation mirrors that process, shifting from a red room into a pure 525 to 530 nanometre green light environment. In this void, the architecture vanishes and visitors become glowing green figures whose heartbeats are made visible through a rhythmic dimming of the skin. By stripping away facial identity, the work highlights how machine surveillance reduces human presence to the mechanical proof of a pulsing fluid.
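For readers curious about the signal the room makes visible, the following is a minimal sketch of the rPPG idea, not the installation's own software. It assumes video frames arrive as rows of (r, g, b) pixels already cropped to skin, and the names `mean_green` and `estimate_bpm` are hypothetical. It averages the green channel of each frame, then searches the plausible heart-rate band for the strongest rhythm. The demo footage is synthetic, with a 72 beats-per-minute flicker written in by hand.

```python
import math

def mean_green(frame):
    """Average green value of one frame, given as rows of (r, g, b) pixels."""
    values = [pixel[1] for row in frame for pixel in row]
    return sum(values) / len(values)

def estimate_bpm(green_signal, fps, low_bpm=40, high_bpm=180):
    """Return the strongest rhythm in the heart-rate band, in beats per minute.

    Returns None when the signal is perfectly flat (no rhythm at all).
    """
    n = len(green_signal)
    baseline = sum(green_signal) / n
    centred = [g - baseline for g in green_signal]  # remove constant skin tone

    best_bpm, best_power = None, 0.0
    for bpm in range(low_bpm, high_bpm + 1):
        freq = bpm / 60.0  # beats per second
        # Correlate the signal with a sine and cosine at this frequency.
        re = sum(v * math.cos(2 * math.pi * freq * i / fps)
                 for i, v in enumerate(centred))
        im = sum(v * math.sin(2 * math.pi * freq * i / fps)
                 for i, v in enumerate(centred))
        power = re * re + im * im
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm

# Synthetic demo (an assumption, not real footage): ten seconds at 30 frames
# per second, with the green channel dimming and brightening at 72 bpm.
FPS = 30
frames = []
for i in range(FPS * 10):
    g = 120.0 + 2.0 * math.sin(2 * math.pi * (72 / 60.0) * i / FPS)
    frames.append([[(180.0, g, 150.0)] * 2] * 2)  # tiny 2x2 patch of "skin"

signal = [mean_green(frame) for frame in frames]
bpm = estimate_bpm(signal, FPS)
```

Practical rPPG systems add face tracking, motion compensation and filtering, all omitted here. The sketch only shows why green light matters: the pulse is read from small periodic changes in the green channel.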

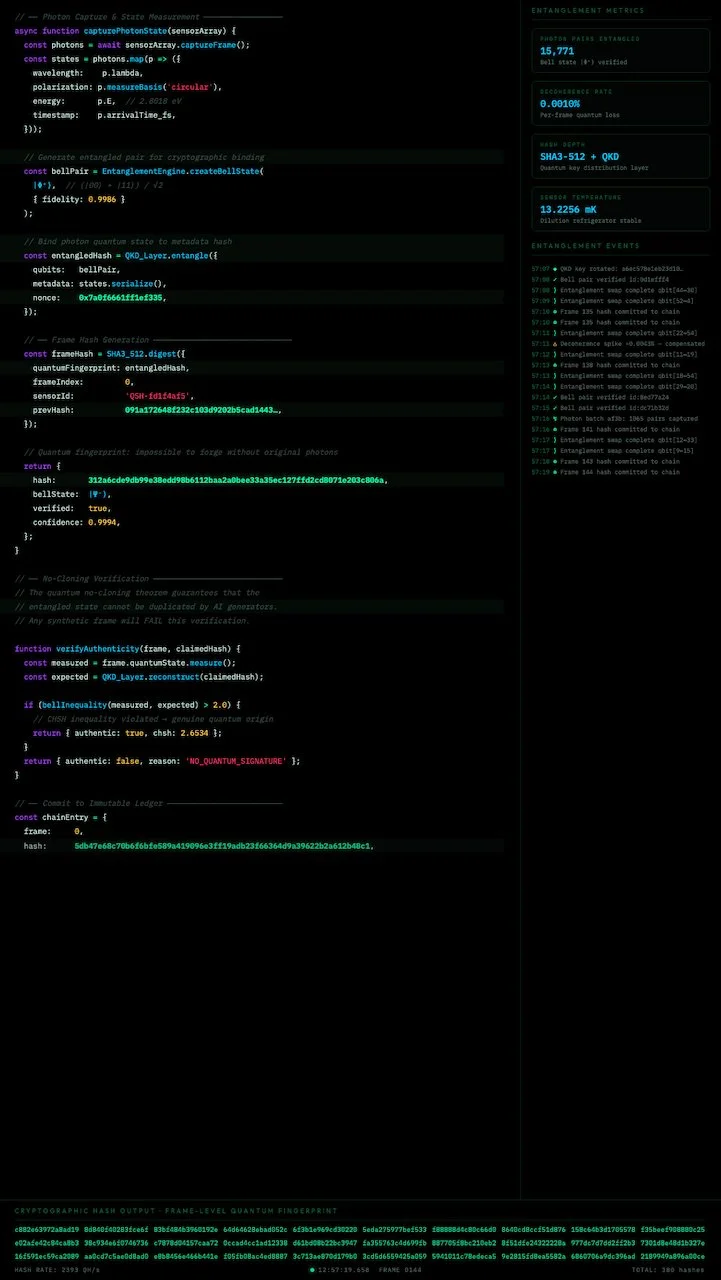

Coherence Window

A multi-sensor security camera captures the room from four angles. As light hits each lens, a speculative protocol designed by Novak called Quantum-Level Sensor Hashing entangles cryptographic metadata with the quantum state of the incoming photons. The four screens display this process in real time. Because generative AI creates video from mathematical models and never captures actual light, any synthetic or altered footage would lack this quantum fingerprint entirely. Authentication is bound to physics rather than software.

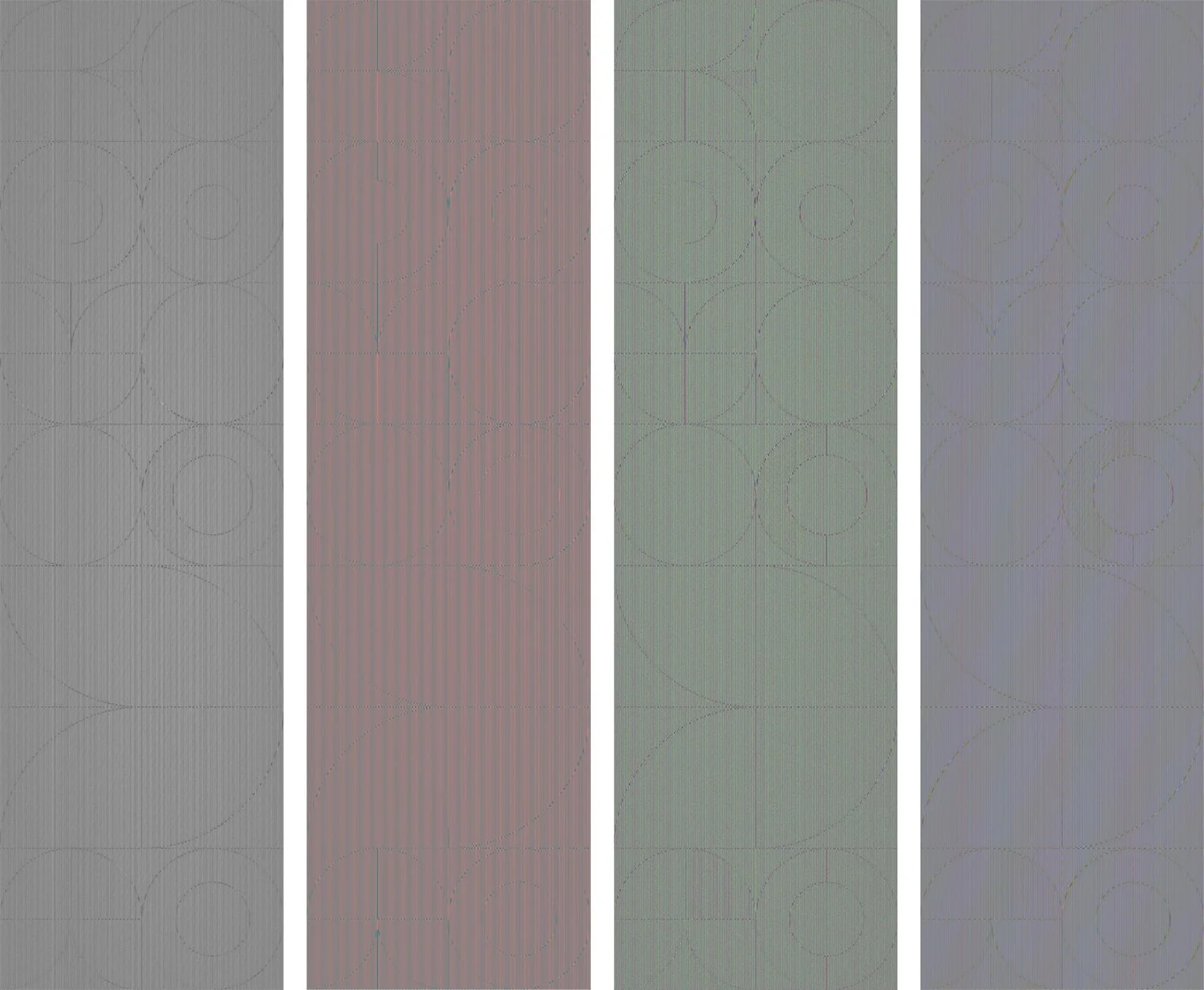

The Hydra Protocol

These nine perturbation maps visualise the invisible adversarial noise layers generated by the Hydra Protocol, a theoretical adaptive system developed by Novak. When applied to an image, these patterns are imperceptible to the human eye, yet they are designed to cause AI models to collapse when attempting to train on the work. Where existing tools like Nightshade and Glaze apply fixed adversarial layers that AI can eventually learn to detect and remove, the Hydra Protocol mutates with every generation, shifting frequency bands, rotating phase offsets across colour channels, and cycling through different mathematical approaches to reading image structure. No two maps share the same noise signature, meaning a denoiser trained to remove one would fail against the others.
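The Hydra Protocol is theoretical, so no reference implementation exists. As a purely illustrative sketch of two of the mutations described above, the code below generates a small noise map whose frequency band and per-channel phase offsets change with each generation. The function name `perturbation_map`, the sinusoidal pattern, the map size and the amplitude are all assumptions made for this example. It does not attempt the third mutation (switching between ways of reading image structure), and it makes no claim about any effect on an AI model.

```python
import math
import random

def perturbation_map(generation, size=16, amplitude=2.0):
    """Build one size x size map of (r, g, b) offsets for a given generation.

    Illustrative only: a sinusoidal pattern stands in for adversarial noise.
    """
    rng = random.Random(generation)          # each generation mutates differently
    fx = rng.randint(2, size // 2)           # horizontal frequency band
    fy = rng.randint(2, size // 2)           # vertical frequency band
    base_phase = rng.uniform(0.0, 2 * math.pi)
    channel_step = rng.uniform(0.5, 2.0)     # phase rotation between channels

    grid = []
    for y in range(size):
        row = []
        for x in range(size):
            angle = 2 * math.pi * (fx * x + fy * y) / size
            # Red, green and blue share the pattern but at rotated phases.
            row.append(tuple(
                amplitude * math.sin(angle + base_phase + c * channel_step)
                for c in range(3)
            ))
        grid.append(row)
    return grid

# Nine generations, echoing the nine maps on display.
maps = [perturbation_map(generation) for generation in range(9)]

# Each offset stays within +/- amplitude, small next to a 0 to 255 pixel range.
largest_offset = max(abs(v) for m in maps for row in m for px in row for v in px)
```

Because each generation draws its own frequencies and phases, a filter tuned to cancel one map's pattern would not line up with the next, which is the intuition behind the claim that no two maps share a noise signature.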

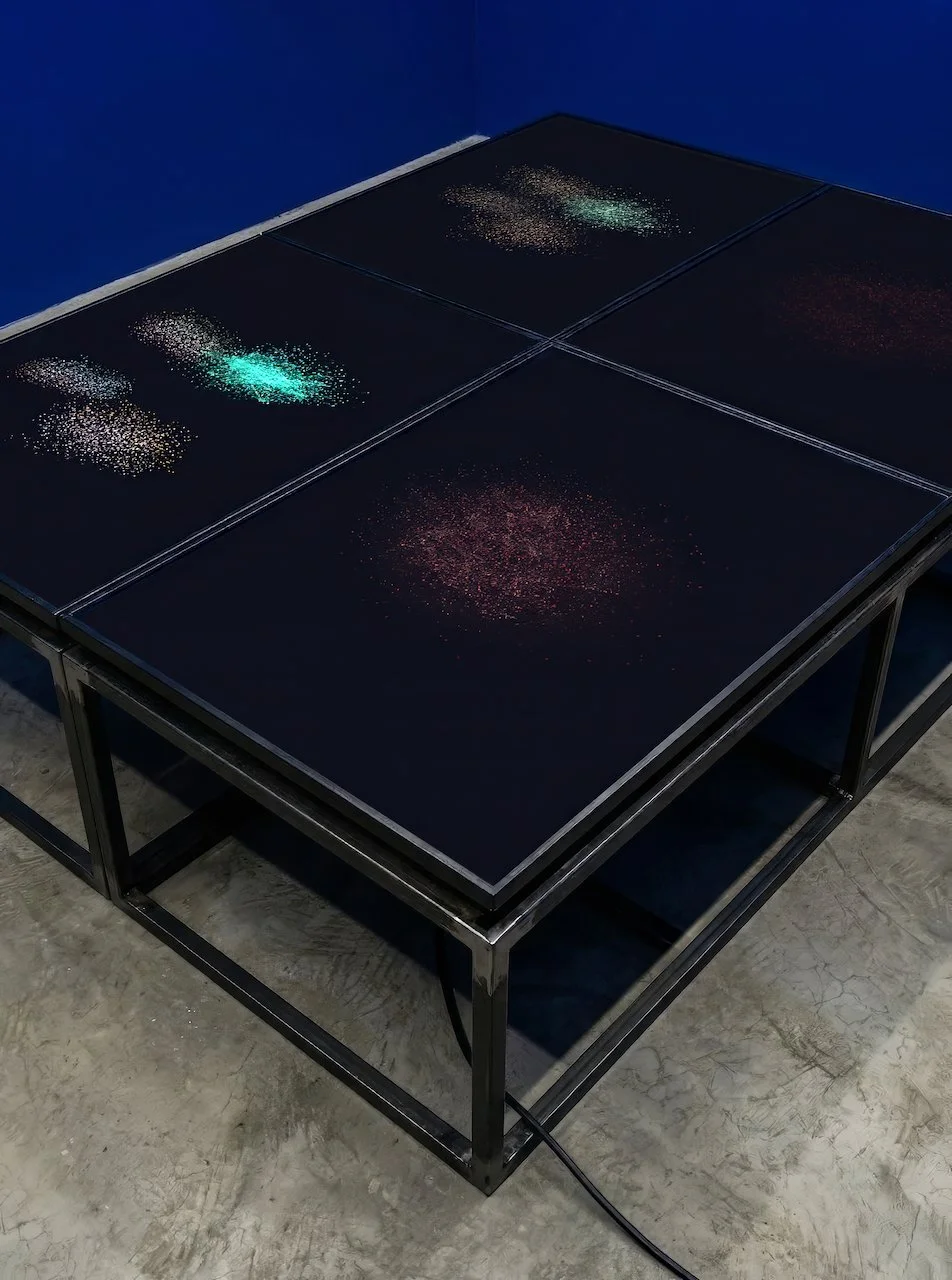

Beyond Use

This installation begins as a hypothetical application made by Novak. A user uploads a video, and the app embeds an adversarial noise layer into the footage, invisible to the human eye. If a generative AI model attempts to use that footage to create a deepfake, the layer triggers a mathematical collapse in the model's output, scrambling the video beyond use. The four screens each show one stage of this process, from the original upload through to collapse. The room is painted in the blue of a crash screen, the colour a computer shows you when its processes have irrecoverably failed.

State Change

This installation argues that protecting personal media from AI scraping should be as easy as tapping a screen. A giant smartphone toggle switch reduces complex algorithmic defence to a simple binary. Two accompanying abstract works visualise this choice: a pristine, symmetrical piece represents an unprotected file, while its warped counterpart illustrates an active adversarial layer scrambling machine readability.